2 Functional Description

This chapter provides a description of the Oracle Communications EAGLE Application Processor (EPAP) design, features, and user interfaces.

General Description

The EAGLE Application Processor (EPAP) platform, coupled with the Provisioning Database Application (PDBA), facilitates and maintains the database required by EPAP-related features. See the Glossary for a list of EPAP-related features. The EPAP serves two major purposes:

- Accept and store data provisioned by the customer

- Update customer provisioning data and reload databases on the Service Module cards in the Multi Purpose Server (MPS)

Operational Flow

The Multi Purpose Server (MPS) hardware platform supports high speed provisioning of large databases for the EAGLE. The MPS is composed of hardware and software components that interact to create a secure and reliable platform. MPS supports the EAGLE Provisioning Application Processor (EPAP).

During normal operation, information flows through the EPAP and PDBA with no intervention. Each EPAP has a graphical user interface that supports maintenance, debugging, and platform operations. The EPAP user interface includes a PDBA user interface for configuration and database maintenance. EPAP Graphical User Interface describes the EPAP and PDBA user interfaces. EPAP Software Configuration includes descriptions of the text-based user interface that performs initial EPAP configuration.

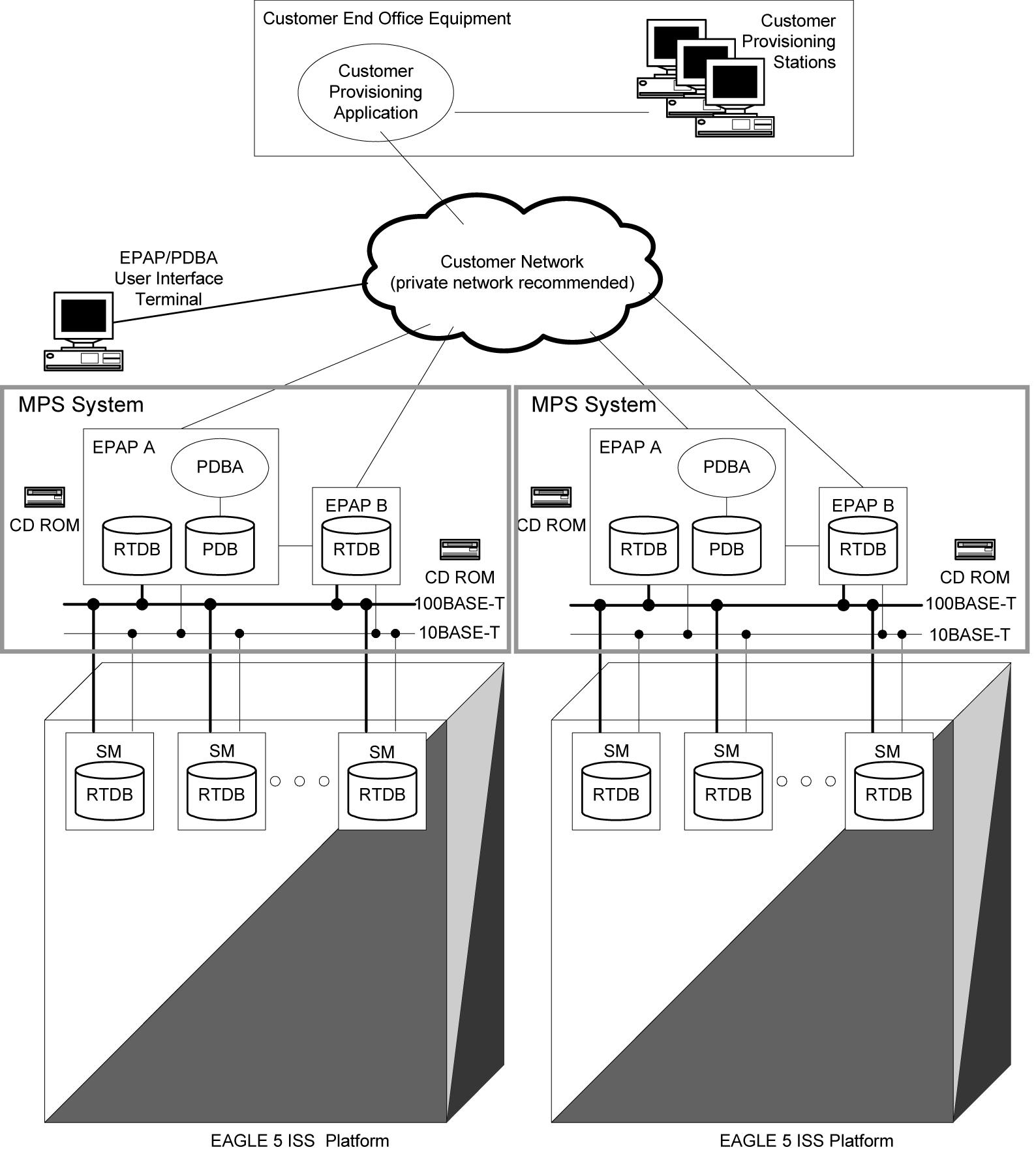

Figure 2-1 Mated EAGLE Platform Example

EPAP Types

Each EPAP can be configured as one of three types:

- Mixed EPAP - This configuration supports the Real Time Database (RTDB) and the Provisioning Database (PDB), along with the associated processes for both. Mixed EPAP is identified as an Oracle Communications EAGLE Application Processor Provisioning (EPAP). Mixed EPAP is described in Mixed EPAP. The majority of the content in this chapter and subsequent chapters applies to Mixed EPAP. EAGLE interfaces, Service Module cards, and Mate Servers are supported by Mixed EPAP.

- Non-Provisioning EPAP - This configuration is similar to Mixed EPAP, but supports only the Real Time Database (RTDB) and its associated processes. The Provisioning Database (PDB) is not supported by this configuration. Non-Provisioning EPAP is identified as an Oracle Communications EAGLE Application Processor Non-provisioning (EPAP). Content in this chapter and subsequent chapters which is PDB-related does not apply to the Non-Provisioning EPAP configuration.

- Standalone PDB EPAP - This configuration supports only the Provisioning Database (PDB) and its associated processes. The Real Time Database (RTDB), EAGLE interfaces, Service Module cards, Mate Servers, and associated processes are not supported by this configuration. Standalone PDB EPAP is identified as an Oracle Communications EAGLE Application Processor Provisioning (EPAP). Content in this chapter and subsequent chapters which is RTDB-related or requires a Mate server or EAGLE interface does not apply to this configuration. Refer to Standalone PDB EPAP for specific information about the Standalone PDB EPAP configuration.

Mixed EPAP

An EPAP system consists of two mated EPAP processors (A and B) installed as part of an EAGLE. A set of Service Module cards is part of the EAGLE. Each Service Module card stores a copy of the Real Time Database (RTDB).

The main and backup DSM networks are two high-speed Ethernet links, which connect the Service Module cards and the EPAPs. Another Ethernet link connects the two EPAPs and is identified as the EPAP Sync network.

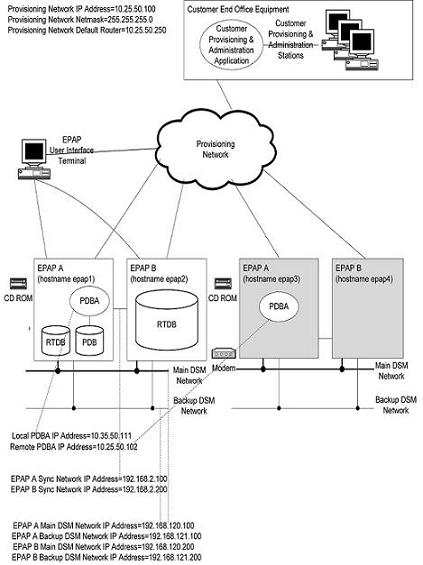

Figure 2-2 shows the network layout and examples of typical IP addresses of the network elements. The shaded portion represents a second EAGLE and mated EPAPs deployed as a mated EAGLE.

The EPAP system maintains the Real Time Database (RTDB) required to provision the EAGLE Service Module cards, and maintains redundant copies of both databases on each mated EPAP.

One EPAP runs as the Active EPAP and the other as the Standby EPAP. In normal operation, the Service Module card database is provisioned through the main DSM network by the Active EPAP.

If the Active EPAP fails, the Standby EPAP takes over the role of Active EPAP and continues to provision the database. If the main DSM network fails completely and connectivity is lost for all EAGLE Service Module cards, the Active EPAP switches to the backup DSM network to continue provisioning the Service Module cards. A failure that has only a limited impact on database provisioning does not automatically trigger a switchover to the backup DSM network. At any given time, only one Active EPAP uses one DSM network per EPAP system.
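The network-selection rule described above can be sketched as follows. This is a minimal illustration, not EPAP code: the function name `select_dsm_network` and the card names are hypothetical, and reachability is modeled as a simple mapping from card name to a boolean.

```python
def select_dsm_network(main_reachability):
    """Return the DSM network the Active EPAP would provision over.

    main_reachability maps a Service Module card name (hypothetical) to
    True if that card is reachable over the main DSM network.
    """
    # Switch to the backup network only when connectivity on the main
    # network is lost for every Service Module card.
    if main_reachability and not any(main_reachability.values()):
        return "backup"
    # A partial failure has limited impact and does not trigger a switch.
    return "main"
```

With this model, losing a single card on the main network keeps provisioning on the main DSM network; only a complete loss selects the backup network.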

The Provisioning Multiple EPAPs Support feature provides the capability to connect to a single active provisionable EPAP A that is used to provision up to 22 non-provisionable EPAP systems. For more information about this feature, see Provisioning Multiple EPAPs Support.

Figure 2-2 Example of EPAP Network IP Addresses

EPAP Switchover

EPAPs assume an Active or a Standby role through negotiation and algorithm. This role affects how the EPAP handles its various external interfaces. External provisioning is allowed only through the Active EPAP. Only the Active EPAP can provide maintenance information to EAGLE. The EPAP role also plays an important part in design details of the individual software components. The EPAP role does not affect the Active/Standby role of the PDBA.

An EPAP can switch from an Active to a Standby role under the following conditions:

- The EPAP maintenance component becomes isolated from the maintenance component on the mate EPAP and from EAGLE.

The maintenance subsystem has attempted and failed to establish communication with each of these:

- the mate maintenance task across the EPAP Sync network

- the mate maintenance task across the main DSM network

- any Service Module card on any DSM network

- The RTDB becomes corrupt.

- All of the RMTP channels have failed.

- A fatal software error occurred.

- The EPAP is forced to Standby by the user interface Force to Become Standby operation.

If the Active EPAP has one or more of the five switchover conditions and the Standby EPAP does not, a switchover will occur. Table 2-1 lists the possible combinations.

Note:

In the absence of Switch 1B (that is, when Switch 1B is down), the EPAP Sync network is not available. In this scenario, restarting the EPAP software may cause both EPAPs (A and B) to enter the Active state, which causes problems with provisioning of the EAGLE Service Module cards.

Table 2-1 EPAP Switchover Matrix

| Active state | Standby state | Event | Switchover? |
|---|---|---|---|
| No switchover conditions | No switchover conditions | Condition occurs on Active | Yes |
| Switchover conditions exist | Switchover conditions exist | Conditions clear on Standby; switches to Active | Yes |
| No switchover conditions | Switchover conditions exist | Condition occurs on Active | No |
| Switchover conditions exist | Switchover conditions exist | Condition occurs on Active | No |
| Switchover conditions exist | Switchover conditions exist | Condition occurs on Standby | No |
| Switchover conditions exist | Switchover conditions exist | Conditions clear on Active | No |

The following are exceptions to the switchover matrix:

- If the mate maintenance component cannot be contacted and the mate EPAP is not visible on the DSM networks, the EPAP assumes an Active role if any DSMs are visible on the DSM networks.

- If the EPAP GUI menu item is used to force an EPAP to Standby role, no condition will cause it to become Active until the user removes the interface restriction with another menu item. See Force Standby and Change Status.

If none of the Standby conditions exist for either EPAP, the EPAPs will negotiate an Active and a Standby. The mate will be considered unreachable after two seconds of attempted negotiation.

For information about the effect of asynchronous replication on switchover, see Asynchronous Replication Serviceability Considerations.
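The basic switchover rule in this section (a switchover occurs when the Active EPAP has at least one switchover condition and the Standby EPAP has none) can be expressed as a short sketch. The names `EpapPair` and `switchover_occurs` are hypothetical, each table row is modeled as the state that results after the listed event, and the exceptions to the matrix (forced Standby, unreachable mate) are deliberately not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EpapPair:
    """Switchover conditions present on each EPAP after an event."""
    active_has_conditions: bool
    standby_has_conditions: bool


def switchover_occurs(pair):
    # A switchover happens only when the Active EPAP has at least one
    # switchover condition and the Standby EPAP has none.
    return pair.active_has_conditions and not pair.standby_has_conditions


# Rows of the switchover matrix, expressed as the post-event state
# and the expected "Switchover?" answer.
SWITCHOVER_MATRIX = [
    (EpapPair(True, False), True),   # condition occurs on Active, Standby clean
    (EpapPair(True, False), True),   # conditions clear on Standby
    (EpapPair(True, True), False),   # condition occurs on Active, Standby affected
    (EpapPair(True, True), False),   # further condition on Active, both affected
    (EpapPair(True, True), False),   # further condition on Standby, both affected
    (EpapPair(False, True), False),  # conditions clear on Active
]
```

Every row of the matrix is consistent with the single rule, which is why the sketch needs only one comparison.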

EPAP Component Overview

The major components that run on the Oracle Communications EAGLE Application Processor Provisioning (EPAP) and Oracle Communications EAGLE Application Processor Non-provisioning (EPAP) are shown in the following table.

Table 2-2 Major Component Tasks

| Task | EPAP Provisioning | EPAP Non-provisioning | Standalone PDB EPAP |
|---|---|---|---|
| Provisioning Database Application (PDBA) | X | X | |
| Provisioning Database (PDB) | X | X | |
| Maintenance task | X | X | X |
| Real Time Database (RTDB) | X | X | |
| RTDB Audit | X | X | |
| DSM Provisioning | X | X | |
| EPAP Service Module Database Levels Monitor (EDLM) | X | X | |

The PDBA task writes customer data into the PDB, which is reformatted to facilitate fast lookups. After conversion, the data is written to the RTDB.

The PDB is the golden copy of the provisioning database. The database records are continuously updated to the PDB from the customer network. The customer uses the Provisioning Database Interface (PDBI) to transfer data over the customer network to the EPAP PDBA. The subscription and entity object commands used by PDBI are described in Provisioning Database Interface User's Guide.

The Maintenance task is responsible for reporting the overall stability and performance of the system. The maintenance task communicates status and alarm information to the primary Service Module card.

One EPAP is equipped with both the PDB and RTDB views of the database. The mate EPAP has only the RTDB view. An EPAP with only the RTDB view must be updated by an EPAP that has the PDB view.

The Service Module card database can go out of sync (become incoherent) due to missed provisioning or card reboot. Out-of-sync Service Module cards are reprovisioned from the RTDB on the Active EPAP. The RTDB audit runs as part of the RTDB task.

The DSM provisioning task resides on both EPAP A and EPAP B. The DSM provisioning task communicates internally with the RTDB task and the EPAP maintenance task. The DSM provisioning task uses Reliable Multicast Transport Protocol (RMTP) to multicast provisioning data to connected Service Module cards across the two DSM networks.

The EPAP SM Database Levels Monitor (EDLM) continuously monitors the current database level of all active (in-service) Service Module Cards on EAGLE. If all of the Service Module Cards stop receiving database updates from the Active EPAP server, EDLM automatically resets the RMTP channels at the EPAP end. This results in a re-established connection with the Service Module Cards on EAGLE.
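The EDLM behavior can be illustrated with a small sketch. How EDLM actually detects that cards have stopped receiving updates is not described here, so the detection rule below is an assumption: a card is treated as stalled when its database level is behind the Active EPAP level and has not advanced between two consecutive polls. The function name `rmtp_reset_needed` and the card names are hypothetical.

```python
def rmtp_reset_needed(previous_levels, current_levels, epap_level):
    """Decide whether the RMTP channels should be reset (assumed rule).

    previous_levels and current_levels map in-service card names to the
    database level seen at two consecutive polls; epap_level is the
    current database level on the Active EPAP.
    """
    if not current_levels:
        return False  # no in-service cards to monitor

    def stalled(card):
        # A card seen for the first time is not counted as stalled.
        return (
            card in previous_levels
            and current_levels[card] <= previous_levels[card]
            and current_levels[card] < epap_level
        )

    # Reset only when every in-service card has stopped receiving updates.
    return all(stalled(card) for card in current_levels)
```

The reset is triggered only when all cards are stalled, which mirrors the statement that EDLM acts when all of the Service Module cards stop receiving updates.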

Provisioning Database Interface

Provisioning clients connect to the EPAPs through the Provisioning Database Interface (PDBI). The PDBI provides commands that communicate provisioning information from the customer database to the Provisioning Database (PDB) in the Active PDBA on EAGLE. The customer issues provisioning commands using a provisioning application. This application uses the PDBI request/response messages to communicate with the EPAP Provisioning Database Application (PDBA) over the customer network. The PDBI is described in Provisioning Database Interface User's Guide.

Network Connections

This section describes the three types of EPAP network connections:

- SM Networks

- EPAP Sync Network

- Customer Network

SM Networks

The SM networks carry provisioning data from the Real Time Databases (RTDBs) on the EPAP to the RTDBs on the Service Module cards. The networks also carry reload and maintenance traffic to the Service Module cards. Each network connects EPAP A and EPAP B to each Service Module card on a single EAGLE platform.

The EAGLE supports more than one model of Service Module card. The cards differ in the size of database and the transactions/second rate that they support. In this manual, the term Service Module card is used to indicate any model of Service Module card, unless a specific model is mentioned. For more information about the supported Service Module card models, refer to Hardware Reference.

When the installed Service Module cards are a mix of E5-SM4G cards and E5-SM8G-B cards, the network speeds are:

- Main SM network - 1 Gbps (1000 Mbps), full duplex

- Backup SM network - 1 Gbps (1000 Mbps), full duplex

Refer to the "Switch Configuration" procedure in Upgrade/Installation Procedure for EPAP.

The first two octets of the EPAP network addresses for this network are 192.168. These are the first two octets for private class C networks as defined in RFC 1597. The fourth octet of the address is selected as follows:

- If the EPAP is configured as EPAP A, the fourth octet has a value of 100.

- If the EPAP is configured as EPAP B, the fourth octet has a value of 200.

Table 2-3 summarizes the derivation of each octet.

The configuration menu of the EPAP user interface contains menu items for configuring the EPAP network addresses. See EPAP Configuration Menu.

Table 2-3 IP Addresses on the SM Network

| Octet | Derivation |
|---|---|
| 1 | 192 |
| 2 | 168 |
| 3 | Usually configured as: |
| 4 | 100 for EPAP A; 200 for EPAP B; 1 - 32 for SM networks |

EPAP Sync Network

The EPAP Sync network is a point-to-point network between the MPS servers. This network provides a high-bandwidth dedicated communication channel for MPS data synchronization. This network operates at full-duplex Gigabit Ethernet speed.

The first two octets of the EPAP IP addresses for the Sync network are 192.168. These are the first two octets for private class C networks as defined in RFC 1597.

The third octet for each EPAP Sync network address is set to 2 by default. This octet value can be changed using option 2 of the Configure Network Interfaces Menu.

The fourth octet of the EPAP Sync network IP address is 100 for EPAP A, and 200 for EPAP B.
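The octet rules for the EPAP addresses on the SM and Sync networks can be captured in a short sketch. The function name `epap_private_address` is hypothetical; the third octet is passed in because it is configurable (2 is the Sync network default stated above).

```python
import ipaddress

# Fourth octet by EPAP designation.
FOURTH_OCTET = {"A": 100, "B": 200}


def epap_private_address(epap, third_octet):
    """Build the 192.168.x.y address for EPAP 'A' or 'B'."""
    if epap not in FOURTH_OCTET:
        raise ValueError("epap must be 'A' or 'B'")
    if not 0 <= third_octet <= 255:
        raise ValueError("third octet must be in the range 0-255")
    # The first two octets are always 192.168.
    return ipaddress.IPv4Address(f"192.168.{third_octet}.{FOURTH_OCTET[epap]}")
```

For example, `epap_private_address("A", 2)` yields the default Sync network address of EPAP A, 192.168.2.100.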

Customer Network

The customer network, or provisioning network, carries the following traffic:

- Customer queries and responses to the PDB (using PDBI)

- Updates between PDBAs on mated EPAP systems

- Updates between PDBAs and RTDBs when the PDBA and the RTDB are not on the same platform - This occurs if the RTDBs on one EPAP system cannot communicate with their local PDBA. These RTDBs would then attempt to communicate with the PDBA on the mate EPAP system.

- RTDB reload traffic if the Active PDBA is not located on the same EAGLE as the RTDB - This occurs if the RTDBs on one EPAP system cannot communicate with their local PDBA. These RTDBs would then attempt to communicate with the PDBA on the mate EPAP system.

- PDBA import/export traffic (file transfer)

- Traffic from a PDBA reloading from its mate

- EPAP and PDBA user interface traffic

A dedicated network is recommended, but unrelated customer traffic can also use this network.

Network Time Protocol (NTP)

The Network Time Protocol (NTP) is an Internet protocol that is used to synchronize clocks of computers to Universal Time Coordinated (UTC) as a time reference. NTP reads the clock of a time server and transmits the result to one or more clients. Each client adjusts its own clock as required. NTP ensures accurate local timekeeping with regard to radio, atomic, or other clocks located on the Internet. This protocol is capable of synchronizing distributed clocks within milliseconds over extended time periods. Without a synchronization protocol, the system time of Internet servers will drift out of synchronization with each other.

The MPS A server of each mated MPS pair is configured by default as a free-running NTP server that communicates with the mate MPS servers on the provisioning network. Free-running refers to a system that is not synchronized to UTC. A free-running system runs on its own clocking source. This allows mated MPS servers to synchronize their clocks.

All MPS servers running the EPAP application can be configured through the EPAP GUI to communicate and synchronize time with a customer-defined NTP time server. The prefer keyword is used to prevent clock-hopping when additional MPS servers or NTP servers are defined.

The core MPS platform provides a default NTP configuration file. MPS configuration includes adding ntppeerA and ntppeerB NTP hostname aliases to the /etc/hosts file.

If the network is equipped with firewalls, configure the firewalls to pass NTP protocol on IP port 123 (both TCP and UDP) between the MPS servers and the NTP servers or peers. The ntpdate program uses TCP while the ntpd program uses UDP.

Understanding Universal Time Coordinated (UTC)

Universal Time Coordinated (UTC) is an official standard for determining current time. The UTC is based on the quantum resonance of the cesium atom. UTC is more accurate than Greenwich Mean Time (GMT), which is based on solar time.

The universal in UTC means that the time can be used anywhere in the world and is independent of time zones. To convert UTC to local time, add or subtract the same number of hours used to convert GMT to local time. The coordinated in UTC means that several institutions contribute their estimate of the current time. UTC is calculated by combining these estimates.

UTC is disseminated by various means, including radio and satellite navigation systems, telephone modems, and portable clocks. Special-purpose receivers are available for time-dissemination services, including Global Position System (GPS) and other services operated by various national governments.

Because equipping every computer with a UTC receiver is too costly and inconvenient, a subset of computers can be equipped with receivers to relay the time to a number of clients connected by a common network. Some of these clients can disseminate the time, in which case these clients become lower stratum servers.

Understanding Network Time Protocol

Network Time Protocol (NTP) primary servers provide time to their clients that is accurate within a millisecond on a Local Area Network (LAN) and within a few tens of milliseconds on a Wide Area Network (WAN). This first level of accuracy is called stratum-1. At each stratum, the client can also operate as a server for the next stratum. A hierarchy of NTP servers is defined with several strata to indicate how many servers exist between the current server and the original time source external to the NTP network:

- A stratum-1 server has access to an external time source that directly provides a standard time service, such as a UTC receiver.

- A stratum-2 server receives its time from a stratum-1 server.

- A stratum-3 server receives its time from a stratum-2 server.

- This NTP network hierarchy can continue up to a stratum-15 server, which receives its time from a stratum-14 server.
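The stratum hierarchy above can be sketched as a walk from a server back to the external time source. This is an illustration of the numbering only, not of the NTP protocol itself; the function name `stratum_of` and the server names are hypothetical.

```python
MAX_STRATUM = 15


def stratum_of(server, time_source):
    """Return the stratum of a server.

    time_source maps each server name to the server it receives time
    from, or to None when the server reads an external reference such
    as a UTC receiver.
    """
    stratum = 1
    current = server
    # Each hop toward the external reference adds one stratum.
    while time_source[current] is not None:
        current = time_source[current]
        stratum += 1
        if stratum > MAX_STRATUM:
            raise ValueError("hierarchy exceeds stratum 15 or contains a loop")
    return stratum
```

A server that reads the external reference directly is stratum-1, and each server that synchronizes to it in turn is one stratum further away.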

Client workstations do not usually operate as NTP servers. NTP servers with a relatively small number of clients do not receive their time from a stratum-1 server. At each stratum, redundant NTP servers and diverse network paths are required to protect against failing software, hardware, or network links. NTP works in one or more of these association modes:

- Client/server mode - A client receives synchronization from one or more servers, but does not provide synchronization to the servers.

- Symmetric mode - Either of two peer servers can synchronize to the other to provide mutual backup.

- Broadcast mode - Many clients synchronize to a single server or to a few servers. This mode reduces traffic in networks that contain a large number of clients. IP multicast can be used when the NTP subnet spans multiple networks.

The Tekelec MPS servers are configured to use the symmetric mode to share their time with their mate MPS servers. For an EPAP system, MPS servers are also configured to share their time with their remote PDBA server.

ITU Duplicate Point Code Support

The EPAP Support of EAGLE ITU Duplicate Point Code feature allows point codes to be provisioned in the PDB using a two-character group code. This feature works with the EAGLE ITU Duplicate Point Code feature, which allows an EAGLE mated pair to route traffic for two or more countries with overlapping (identical) point code values. The EPAP Support of ITU Duplicate Point Code feature allows group codes to be entered for Network Entity (SP or RN) point codes using the PDBI. The PDBI supports the provisioning of a two-character group code as a suffix to the point code of a PDBI network entity.

Note:

The EAGLE ITU Duplicate Point Code feature (ITUDUPPC) must be enabled and turned on to use the EPAP Support of ITU Duplicate Point Code feature. For information about enabling or turning on any feature in the EAGLE, refer to the chg-feat command in Commands User's Guide.

For example, a point code of 1-1-1 can be provisioned in the PDBI as 1-1-1-ab, where 1-1-1 is the true point code and ab is the group code. This usage allows the EAGLE to discriminate between two nodes in different countries with the same true point code. The EAGLE uses the group code to distinguish between the two nodes. The group code is used internally in the EAGLE only and is assigned to an incoming message based on the linkset on which it was received.

For example, Figure 2-3 shows a network that includes two countries, Country 1 and Country 2. Both countries have SSPs with a point code value of 2047.

Figure 2-3 Network Example with DPC and Group Codes

Users must divide their ITU-National destinations into groups. These groups are usually based on the country. However, one group could have multiple countries within it, or a single country could be divided into multiple groups. The requirements for these groups are:

- No duplicate point codes are allowed within a group.

- ITU-National traffic from a group must be destined for a PC within the same group.

- The user must assign a unique two-letter group code to each group.

In the network example shown in Figure 2-3, Country 1 can have only one point code with a value of 2047. Traffic coming from SSP 2047 in Country 1 can be destined only to other nodes within Country 1. In this network example, the user assigns a group code of ab to Country 1, and a group code of cd to Country 2.
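The first group requirement (no duplicate point codes within a group) can be checked with a short sketch. The function name `duplicate_point_codes` is hypothetical, and destinations are modeled as `(point_code, group_code)` pairs using the values from the network example.

```python
from collections import Counter


def duplicate_point_codes(destinations):
    """Return the (point_code, group_code) pairs that occur more than once.

    destinations is an iterable of (point_code, group_code) tuples.
    The same point code may appear in different groups, but not twice
    within one group.
    """
    counts = Counter(destinations)
    return sorted(pair for pair, seen in counts.items() if seen > 1)
```

The point code 2047 may appear once under group code ab and once under cd, but a second 2047 under ab would be reported as a duplicate.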

When the user enters an ITU-National point code, the user must also enter the group code, using the format “point code - group code”. This group code must be used for any command that uses an ITU-N point code.

The ITU Duplicate Point Code Support feature for EPAP and PDBI allows group codes to be entered for Network Entity (SP or RN) point codes using PDBI commands. These commands are described in Provisioning Database Interface User's Guide.

The PDBI supports the provisioning of a two-character group code suffixed to a point code of a PDBI Network Entity (NE). You can provision a group code to any valid NE, for example, RN or SP.
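Splitting a provisioned value such as 1-1-1-ab into its true point code and group code can be sketched as follows. The function name `split_point_code` is hypothetical and this is not the PDBI parser; the sketch assumes the group code is the final hyphen-separated field and consists of exactly two ASCII letters.

```python
def split_point_code(value):
    """Split 'point code - group code' into (point_code, group_code)."""
    # The group code is the suffix after the last hyphen.
    point_code, separator, group_code = value.rpartition("-")
    if not separator or not point_code:
        raise ValueError("expected the form <point code>-<group code>")
    if len(group_code) != 2 or not (group_code.isascii() and group_code.isalpha()):
        raise ValueError("group code must be two letters")
    return point_code, group_code
```

For example, `split_point_code("1-1-1-ab")` separates the true point code 1-1-1 from the group code ab, and a value without a two-letter suffix is rejected.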

Note:

The PDB does not check for uniqueness of point codes provisioned into the PDB-based feature databases.

All routing for PDB-based features specifies group codes stored with the NE's point codes in accord with the EAGLE Duplicate Point Code feature.

Note:

This should already be the case for the protocol side of these features, but you should system test it when provisioning to the PDB with group codes to ensure that the group code is being used by the features for message relay.

For more information about group codes, refer to Group Codes in Database Administration - SS7 User's Guide.

Asynchronous Replication

Asynchronous replication is the method used to synchronize the various Provisioning Databases (PDBs) in a client network. Asynchronous replication means that the active PDB receives an update, commits to accept the change, and returns a code to the client indicating success or failure. This series of actions occurs before the active PDB forwards the update to the replicated database, which is the standby PDB.

Only successful updates are replicated. As a result, the response turnaround on the active PDB is shortened, and the overhead required to maintain database synchronization is reduced.

Potential Lag Introduced by Asynchronous Replication

Lag means that the database level of the standby PDB is lower than the database level of the active PDB. With any asynchronous data replication scheme, the receivers of replicated data may lag behind. The standby PDB and, depending upon the homing policy in effect, the RTDB applications are susceptible to lag.

During continual provisioning traffic, the active PDB is expected to be a small number of levels ahead of the standby PDB. Also, any RTDB that is homed to the standby PDB is expected to have a level lower than the active PDB. For more information, refer to Selective Homing of EPAP RTDBs.
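The relationship between asynchronous replication and lag can be illustrated with a toy model. This is a sketch under simplifying assumptions, not the PDBA implementation: the class and function names are hypothetical, a database level is modeled as a counter that increases by one per successful update, and replication is driven by an explicit call rather than a background process.

```python
from collections import deque


class ActivePdb:
    def __init__(self):
        self.level = 0
        self.outbox = deque()  # committed updates not yet replicated

    def apply(self, update, accepted=True):
        """Commit an update and answer the client before replicating."""
        if not accepted:
            return "failure"  # failed updates are never replicated
        self.level += 1
        self.outbox.append((self.level, update))
        return "success"


class StandbyPdb:
    def __init__(self):
        self.level = 0
        self.records = []


def replicate(active, standby, max_updates=1):
    """Forward up to max_updates committed updates to the standby."""
    sent = 0
    while active.outbox and sent < max_updates:
        level, update = active.outbox.popleft()
        standby.records.append(update)
        standby.level = level
        sent += 1
    return sent


def lag(active, standby):
    """Number of levels by which the standby trails the active PDB."""
    return active.level - standby.level
```

Because the client is answered before replication runs, the lag is greater than zero whenever the outbox is non-empty, and it returns to zero once the standby has caught up.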

Asynchronous Replication Alarms

If the replication lag between PDB database levels increases to a value deemed unacceptable by EPAP, the following alarms are raised.

- PDBA Replication Failure (REPLERR) is a major alarm that indicates a failure of PDBA replication. The user must contact My Oracle Support.

- Standby PDBA Falling Behind is a minor alarm that signals that one EAGLE of the pair may have received updates at a longer interval than the other EAGLE. This alarm condition does not indicate data loss or corruption.

EPAP Security Enhancements

The EPAP Security Enhancements feature restricts access to the EPAP Graphical User Interface (GUI) to specific IP addresses. The allowed IP addresses are stored in an EPAP list and can be added, deleted, and retrieved only by an authorized user. The EPAP Security Enhancements feature also allows an authorized user to use the GUI to toggle IP authorization checking on and off.

The administrator or a user with IP action privileges can add, delete, and retrieve IP addresses. Deleting an IP results in that IP address no longer residing in the IP table, hence preventing the IP address from being able to connect to an EPAP. While each of the IP action privileges can be assigned to any individual user, the add and delete IP action privileges should be granted to only those users who are knowledgeable about the customer network.

The ability to add, delete, and retrieve client IP addresses and to toggle IP authorization checking is assignable by function. This ability is accessible through the EPAP GUI. Refer to Authorized IPs. The IP mechanism implemented in this feature provides the user with enhanced EPAP privilege control.

The EPAP Security Enhancements feature is available through the EPAP GUI and is available initially to only the administrator. The ability to view IP addresses on the customer's network is a security consideration and should be restricted to users with administration group privileges. In addition, privileged users can prepare a custom message to replace the standard 403 Forbidden site error message.

IP access and range constraints provided by the web server and the EPAP Security Enhancement feature cannot protect against IP spoofing, which refers to the creation of TCP/IP packets using another's IP address. IP spoofing is IP impersonation or misrepresentation. The customer must rely on the security of their intranet network to protect against IP spoofing.

EPAP maintains a list of the IP addresses authorized to access the EPAP GUI. Only requests from IP addresses on the authorized list can connect to the EPAP GUI. Attempts from any unauthorized address are rejected.

IP addresses are not restricted from accessing the EPAP GUI until the administrator toggles IP authorization to enabled. When IP authorization checking is enabled, any IP address not present in the IP authorization list will be refused access to the EPAP GUI.

The EPAP Security Enhancements feature also provides the ability to enable and disable the IP address list after the list is provisioned. If the list is disabled, the provisioned IP addresses are retained in the database, but access is not blocked from the IP addresses that are not on the list. The EPAP GUI restricts permission to enable and disable the IP address list to specific user names and passwords.
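The interaction between the authorized IP list and the authorization toggle can be sketched as follows. The class name `AuthorizedIpList` and its methods are hypothetical and only model the behavior described above; user privileges and the GUI itself are not modeled.

```python
import ipaddress


class AuthorizedIpList:
    def __init__(self):
        # Checking is off until an administrator enables it.
        self.checking_enabled = False
        self._addresses = set()

    def add(self, ip):
        self._addresses.add(ipaddress.ip_address(ip))

    def delete(self, ip):
        self._addresses.discard(ipaddress.ip_address(ip))

    def retrieve(self):
        return sorted(str(address) for address in self._addresses)

    def toggle(self):
        """Switch IP authorization checking on or off; the list is kept."""
        self.checking_enabled = not self.checking_enabled
        return self.checking_enabled

    def is_allowed(self, ip):
        # With checking disabled, no address is blocked.
        if not self.checking_enabled:
            return True
        return ipaddress.ip_address(ip) in self._addresses
```

Disabling checking leaves the provisioned addresses in place, so enabling it again restores the same restrictions without reprovisioning the list.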

The IP actions for adding, deleting, and retrieving authorized IP addresses and for toggling IP authorization checking are available from only the EPAP GUI, as described in EPAP Graphical User Interface, and are not available from the EPAP text-based user interface.

For additional security, kernel parameters in the /etc/sysctl.conf file are set to reduce exposure to network attacks and security breaches.

Backup Provisioning Network Interface

The Backup Provisioning Network Interface feature adds an alternative connection for redundancy between the EPAP A server and the customer’s Provisioning Database Interface (PDBI). This additional interface provides a backup path for the PDBI to continue communicating with the EPAP A if the primary connection is lost.

Note:

If the EPAP A is connected with a Standby PDB and non-provisioning nodes, the nodes will not be updated. These nodes will have to wait until the main Provisioning Network of the EPAP A is up. The customer can provision Backup IP Addresses in the Standby and Non-provisioning nodes.

A PDBI client normally uses port eth0 on the active EPAP to provision the PDB, using the Configure Provisioning Network option 1 of the Configure Network Interfaces Menu. If a failure occurs in the normal connection, the Backup Provisioning Network Interface feature allows the customer to use secondary port eth4, using option 4 of the Configure Network Interfaces Menu. No automatic switchover occurs. After the customer observes the communications failure, the customer performs a manual switch to begin addressing the secondary port defined by the configuration procedure.

The Configure Network Interfaces Menu (see Configure Network Interfaces Menu) describes how to configure the primary and secondary provisioning network interface connections. For more information about configuring a Backup Provisioning Network, refer to Configure Backup Provisioning Network.

Provisioning Multiple EPAPs Support

The Provisioning Multiple EPAPs Support feature provides the ability for a single PDBI connection to provision up to four MPSs. Each MPS contains an EPAP A and an EPAP B. The PDBI connects to and provisions an EPAP A and a Provisioning Database (PDB). The remaining three MPSs are automatically provisioned from the active EPAP A. This allows users to add EAGLEs without changing their provisioning systems and without provisioning the EAGLEs from multiple sources.

The Provisioning Multiple EPAPs Support feature is transparent to the PDBI clients. PDBI clients can provision data in the same manner regardless of whether provisioning a single MPS pair or multiple MPS pairs. The PDBI client connects to one PDB for provisioning. The remaining MPSs in the customer network automatically remain synchronized. EPAP software updates the RTDBs at the additional sites.

This feature does not affect which PDBA the RTDBs connect to for receiving updates. Receiving updates continues to be under the control of the EPAP user interface.

The two MPSs that contain the PDB are identified as provisionable because the customer provisioning application connects to and updates these sites. The remaining MPSs are identified as non-provisionable. Figure 2-4 shows a view of the provisionable and non-provisionable EPAPs.

Figure 2-4 Support for Provisioning Multiple EPAPs

Selective Homing of EPAP RTDBs

The Selective Homing of EPAP RTDBs feature allows users to select the PDB from which updates are received. The homing selection is an option at EPAP configuration. Users can choose how the RTDBs on an MPS node receive updates by one of the following methods:

- IP address - a specific PDBA process, which may be active or standby

- PDB state - the active or standby PDBA process, which may or may not be local

This feature permits all RTDBs within an MPS system, which includes both nodes of a mated pair or multiple nodes within several mated pairs, to always receive updates from a specific PDB, the active PDB, or the standby PDB. Updates are always received from the selected PDBA process, regardless of whether the PDBA is the local PDBA or remote PDBA.

An EPAP configuration option allows the user to select whether the RTDB of an EPAP will normally receive updates. The RTDB is homed to a specific PDBA, the active PDBA , or the standby PDBA. If the user selects specific homing for the RTDB, that RTDB will receive updates from the specified PDBA, regardless of whether the PDBA is active or standby.

If the RTDB cannot communicate with the specified PDBA, the RTDB will automatically begin to receive updates from its alternate PDBA. Before the Selective Homing of EPAP RTDBs feature, updates were received from the remote PDBA.

The homing of each RTDB is independently selectable and allows some RTDBs to be homed to their local PDBA, to the active PDBA, or to the standby PDBA.

Terminology used in Configuration Descriptions

- specific PDBA

- Specific PDBA is used instead of local PDBA because the architecture can result in an MPS without a PDB on EPAP A. In this case, the RTDBs on that node have no local PDBA. Selective homing specifies the IP addresses of the MPSs with the first and second choices of PDBA. In a two-node MPS system, this corresponds directly to local homing. With more than two nodes, the user selects a specific PDBA without designating the PDBA as local or remote.

- active PDBA

- Active PDBA is the PDBA selected by the user to receive updates from the user’s provisioning system using the PDBI.

- remote PDBA

- For a given RTDB, the remote PDBA is a PDBA on a different MPS node. This PDBA may or may not be the active PDBA.

- preferred PDB

- Preferred PDB is the PDB selected by the RTDB. The RTDB is homed to the preferred PDB. For more about standby PDB homing, see Asynchronous Replication Serviceability Considerations. When specific RTDB homing has been selected, the RTDB will receive updates from the alternate PDB if the preferred PDB is unreachable.

- alternate PDB

- Alternate PDB is the PDB that is not selected. When either active or standby PDB homing is selected, the RTDB has the option to receive updates from the alternate PDB if the preferred PDB is unreachable.

- active homing

- If the user selects active homing for the RTDB, that RTDB always receives updates from the active PDBA. If the RTDB loses its connection with the active PDBA, the RTDB will automatically begin to receive updates from a standby PDBA; the reverse is true for ‘standby’ homing. This automatic switchover is a configurable option. See Switchover PDBA State.

Possible RTDB Configurations

These are the possible configurations of each RTDB in the system. Each configuration is also described in detail in the following sections.

Specific PDB Homing with Alternate PDB

This RTDB configuration specifies the IP address of the PDB from which the RTDB receives updates. If the specified PDB is not reachable, the RTDB receives updates from the alternate PDB. Table 2-4 shows the condition of each possible connection to the PDB from the RTDB perspective. The shaded cells indicate the PDB from which the RTDB receives updates.

Table 2-4 Specific PDB Homing with Alternate PDB (RTDB Configuration 1)

| Site 1 PDB Reachable? | Site 1 PDB State | Site 2 PDB Reachable? | Site 2 PDB State | RTDB Data Source (Note 2) |
|---|---|---|---|---|
| Yes | Active | Yes | Standby | Site 1 PDB |
| Yes | Standby | Yes | Active | Site 1 PDB |
| Yes | Active | No | - | Site 1 PDB |
| Yes | Standby | No | - | Site 1 PDB |
| No | - | Yes | Active | Site 2 PDB |
| No | - | Yes | Standby | Site 2 PDB |
| No | - | No | - | None |

Note 1: Site 1 = Preferred PDB; Site 2 = Alternate PDB

Note 2: PDB from which the RTDB receives updates

See RTDB Homing Considerations to determine if this configuration is the most appropriate match for your installation.

Active PDB Homing with Alternate PDB

This RTDB configuration specifies that the RTDB receives updates from the active PDB. If the active PDB is not reachable, the RTDB receives updates from the standby PDB. Table 2-5 shows the condition of each possible connection to the PDB from the RTDB perspective. The shaded boxes indicate the PDB from which the RTDB receives updates.

Table 2-5 Active PDB Homing with Alternate PDB (RTDB Configuration 2)

| Site 1 PDB Reachable? | Site 1 PDB State | Site 2 PDB Reachable? | Site 2 PDB State | RTDB Data Source (Note 2) |
|---|---|---|---|---|
| Yes | Active | Yes | Standby | Site 1 PDB |
| Yes | Standby | Yes | Active | Site 2 PDB |
| Yes | Active | No | - | Site 1 PDB |
| Yes | Standby | No | - | Site 1 PDB |
| No | - | Yes | Active | Site 2 PDB |
| No | - | Yes | Standby | Site 2 PDB |
| No | - | No | - | None |

Note 1: Active = Preferred PDB; Standby = Alternate PDB

Note 2: PDB from which the RTDB receives updates

See RTDB Homing Considerations to determine if this configuration is the most appropriate match for your installation.

Active PDB Homing without Alternate PDB

This RTDB configuration specifies that the RTDB receives updates from the active PDB. No alternate PDB is specified. Table 2-6 shows the condition of each possible connection to the PDB from the RTDB perspective. The shaded boxes indicate the PDB from which the RTDB receives updates.

Table 2-6 Active PDB Homing without Alternate PDB (RTDB Configuration 3)

| Site 1 PDB Reachable? | Site 1 PDB State | Site 2 PDB Reachable? | Site 2 PDB State | RTDB Data Source (Note 2) |
|---|---|---|---|---|
| Yes | Active | Yes | Standby | Site 1 PDB |
| Yes | Standby | Yes | Active | Site 2 PDB |
| Yes | Active | No | - | Site 1 PDB |
| Yes | Standby | No | - | None |
| No | - | Yes | Active | Site 2 PDB |
| No | - | Yes | Standby | None |
| No | - | No | - | None |

Note 1: Active = Preferred PDB; Standby = Alternate PDB

Note 2: PDB from which the RTDB receives updates

See RTDB Homing Considerations to determine if this configuration is the most appropriate match for your installation.

Standby PDB Homing with Alternate PDB

This RTDB configuration specifies that the RTDB receives updates from the standby PDB. If the standby PDB is not reachable, the RTDB receives updates from the active PDB. Table 2-7 shows the condition of each possible connection to the PDB from the RTDB perspective. The shaded boxes indicate the PDB from which the RTDB will receive updates.

Table 2-7 Standby PDB Homing with Alternate PDB (RTDB Configuration 4)

| Site 1 PDB Reachable? | Site 1 PDB State | Site 2 PDB Reachable? | Site 2 PDB State | RTDB Data Source (Note 2) |
|---|---|---|---|---|
| Yes | Standby | Yes | Active | Site 1 PDB |
| Yes | Active | Yes | Standby | Site 2 PDB |
| Yes | Standby | No | - | Site 1 PDB |
| Yes | Active | No | - | Site 1 PDB |
| No | - | Yes | Standby | Site 2 PDB |
| No | - | Yes | Active | Site 2 PDB |
| No | - | No | - | None |

Note 1: Standby = Preferred PDB; Active = Alternate PDB

Note 2: PDB from which the RTDB receives updates

See Asynchronous Replication Serviceability Considerations to determine if this configuration is the most appropriate match for your installation.

Standby PDB Homing without Alternate PDB

This RTDB configuration specifies that the RTDB receives updates from the standby PDB. No alternate PDB is specified. Table 2-8 shows the condition of each possible connection to the PDB from the RTDB perspective. The shaded boxes indicate the PDB from which the RTDB receives updates.

Table 2-8 Standby PDB Homing without Alternate PDB (RTDB Configuration 5)

| Site 1 PDB Reachable? | Site 1 PDB State | Site 2 PDB Reachable? | Site 2 PDB State | RTDB Data Source (Note 2) |
|---|---|---|---|---|
| Yes | Standby | Yes | Active | Site 1 PDB |
| Yes | Active | Yes | Standby | Site 2 PDB |
| Yes | Standby | No | - | Site 1 PDB |
| Yes | Active | No | - | None |
| No | - | Yes | Standby | Site 2 PDB |
| No | - | Yes | Active | None |
| No | - | No | - | None |

Note 1: Standby = Preferred PDB; Active = Alternate PDB

Note 2: PDB from which the RTDB receives updates

See Asynchronous Replication Serviceability Considerations to determine if this configuration is the most appropriate match for your installation.
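The five RTDB configurations in Tables 2-4 through 2-8 follow one pattern: pick the preferred PDB if it is reachable, otherwise fall back to the other reachable PDB only when an alternate is permitted. The following Python sketch models that decision. It is an illustration only, not EPAP code; the names `PdbView`, `select_data_source`, `policy`, and `use_alternate` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PdbView:
    """One PDB as seen from the RTDB (hypothetical model, not an EPAP type)."""
    name: str
    reachable: bool
    state: Optional[str] = None  # "active" or "standby"; None when unreachable


def select_data_source(policy, site1, site2, use_alternate=True):
    """Return the name of the PDB that supplies updates, or None.

    policy is "specific" (site1 is the preferred PDB), "active", or "standby".
    The tables document five combinations; "specific" without an alternate
    is not one of them and is accepted here only for completeness.
    """
    reachable = [pdb for pdb in (site1, site2) if pdb.reachable]
    if policy == "specific":
        preferred = [pdb for pdb in reachable if pdb is site1]
    elif policy in ("active", "standby"):
        preferred = [pdb for pdb in reachable if pdb.state == policy]
    else:
        raise ValueError(f"unknown homing policy: {policy}")

    if preferred:
        return preferred[0].name
    if use_alternate and reachable:
        # Preferred PDB is unreachable; fall back to the one that remains.
        return reachable[0].name
    return None
```

For example, with standby homing and no alternate (Table 2-8), a reachable active Site 1 PDB and an unreachable Site 2 PDB yield `None`, which matches the fourth row of that table.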

General Homing Considerations

The Selective Homing of EPAP RTDBs feature requires additional configuration during installation and when new non-provisionable nodes are added. The configuration of all affected sites must be planned before the installation process begins.

Each MPS must be configured as provisionable or non-provisionable. Each network has two provisionable MPSs. (See Figure 2-4.) These MPSs must then be configured with a replicated PDB, which is described in EPAP Software Configuration. If non-provisionable MPSs are used, the non-provisionable MPSs are added after the provisionable MPSs are installed and configured.

Before the first MPS can be installed, the answers to these questions must be available:

-

How many MPSs are involved?

-

Which sites will be provisionable?

-

How will each RTDB be homed?

For the configuration on each site, the answers to these questions must be available:

-

What are the IP addresses of the A sides of the two provisionable sites?

-

What is the RTDB homing policy for this site?

RTDB Homing Considerations

Although RTDB homing allows a wide variety of configurations, two overall configurations cover the needs of most customers.

-

Configuration for Load Sharing and High Availability

In this configuration, all RTDBs are configured for specific RTDB homing. The alternate PDB is an acceptable provisioning source if the preferred PDB is unavailable. The RTDBs at the provisionable MPS prefer the local PDB. The remaining non-provisionable MPSs are divided evenly to prefer one PDB or the other. See Specific PDB Homing with Alternate PDB for more information on this RTDB Homing configuration.

-

Configuration for Deterministic Provisioning

In this configuration, all RTDBs are configured for active homing. The alternate PDB is not an acceptable provisioning source. See Active PDB Homing without Alternate PDB for more information about this RTDB homing configuration.

Asynchronous Replication Serviceability Considerations

The RTDB homing policy selected affects serviceability. For asynchronous PDB replication, Tekelec recommends Standby PDB Homing (RTDB Configuration 4 or 5). Although this policy may result in a slightly longer propagation time for updates from the PDB to the RTDB, the increased delay is beneficial in disaster recovery situations.

Keeping the RTDB homed to the standby PDB ensures that, except for external intervention, every level present in the RTDB is also present in both PDBs. Both active and specific RTDB homing methods will continue to be valid. The homing methods provide proper function under all normal operating circumstances. Under these homing policies, the RTDB can possibly reach a database level that is higher than that of the PDB to which it may home in response to a PDBA switchover. If this occurs, the RTDB must be recreated from the PDB to which the RTDB currently points.

Active and specific homing may create complications in disaster situations. For instance, if a failure forces the active PDB to become unavailable for a non-trivial amount of time and the user forces switchover to Standby (bypassing the protocol that syncs the databases prior to switchover), the dataset of the RTDB can possibly conflict with the dataset of the only remaining PDB. This situation requires an RTDB reload from the remaining PDB, which can be a time-consuming process for any PDB with a large amount of data.

Asynchronous replication has one important effect on EPAP behavior. PDBA switchover can no longer be forced when the PDBAs are able to communicate and the standby is not current. Switchover involves allowing a definable amount of time for the standby PDB to be brought up to the level of the active PDB. If the standby PDB fails to achieve the equal database level in the allotted time, switchover does not occur and the standby PDB returns with the number of levels still remaining to be replicated. This approach prevents database inconsistency. If the standby PDB cannot reach the active PDB to determine its level, the EPAP allows PDBA switchover to be forced.
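The switchover rule described above can be sketched as a small decision function. This is a simplified model under stated assumptions, not the EPAP implementation: `attempt_switchover` and its parameters are hypothetical, and `catch_up_capacity` stands in for the number of levels the standby PDB can replicate within the allotted time.

```python
from typing import Optional, Tuple


def attempt_switchover(
    active_level: Optional[int],
    standby_level: int,
    catch_up_capacity: int,
    force: bool = False,
) -> Tuple[bool, int]:
    """Return (switchover_occurs, levels_still_to_replicate).

    active_level is None when the standby cannot reach the active PDB
    to determine its level.
    """
    if active_level is None:
        # The level is unknown, so only a forced switchover can proceed.
        return (force, 0)

    # The PDBAs can communicate: the standby must catch up first, and
    # forcing is not honored while the standby is behind.
    behind = max(active_level - standby_level, 0)
    remaining = max(behind - catch_up_capacity, 0)
    return (remaining == 0, remaining)
```

In this model, a standby at level 700 facing an active PDB at level 704 with capacity for only two levels returns `(False, 2)`, even when `force=True`.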

Socket-Based Connections

The EPAP receives PDBI messages through a TCP/IP socket. The client application is responsible for connecting to the PDBA well-known port and for sending and receiving the defined messages. The customer’s provisioning system is responsible for detecting and processing socket errors. Tekelec recommends that the TCP keepalive interval on the customer’s socket connection be set to a value that permits prompt detection and reporting of a socket disconnection problem.
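As an illustration of the keepalive recommendation, the following Python sketch enables TCP keepalive on a client socket before it connects. The function name `enable_keepalive` and the timing values are assumptions chosen for the example; this guide does not prescribe specific values. The fine-grained options are platform dependent, so the sketch sets only those that the local Python build exposes.

```python
import socket


def enable_keepalive(sock, idle_s=60, interval_s=10, probes=5):
    """Turn on TCP keepalive for a client socket (illustrative values)."""
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_KEEPALIVE, 1)

    # Per-connection timing options are not available on every platform.
    for option_name, value in (
        ("TCP_KEEPIDLE", idle_s),       # idle seconds before the first probe
        ("TCP_KEEPINTVL", interval_s),  # seconds between probes
        ("TCP_KEEPCNT", probes),        # failed probes before the drop
    ):
        option = getattr(socket, option_name, None)
        if option is not None:
            sock.setsockopt(socket.IPPROTO_TCP, option, value)
    return sock


# Usage: configure the socket, then connect it to the PDBA host and port
# that apply to your installation.
client = enable_keepalive(socket.socket(socket.AF_INET, socket.SOCK_STREAM))
```

Shorter idle and interval values detect a dead connection sooner at the cost of more probe traffic.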

There is a limit to the number of PDBI connections; the default is 16 clients. If an attempt is made to connect more than the current client limit, a response is returned to the client:

PDBI_TOO_MANY_CONNECTIONS

After the response is returned, the socket is automatically closed.

Although the default limit is 16 PDBI connections, Tekelec is able to configure and support up to 128 connections. If more than 16 connections are required, contact My Oracle Support for information.

File Transfer Options

Note:

For the import to work properly, "PDB_RTDB_SYNC" must be set to "YES". Check the current value of "PDB_RTDB_SYNC" by running the command:

uiEdit | grep PDB_RTDB_SYNC

If it's set to "NO", set it to "YES" before attempting to import. Run the following command:

uiEdit PDB_RTDB_SYNC YES

Import Files

Manual import and automatic import are the available import file options. Both import options accept data only in the PDBI format.

Valid commands to include in an import file are:

- ent_sub

- upd_sub

- dlt_sub

- ent_entity

- upd_entity

- dlt_entity

- ent_eir

- upd_eir

- dlt_eir

Do not include rtrv_sub, rtrv_entity, or rtrv_eir commands in an import file. The inclusion of rtrv commands causes an import to take a long time to complete. A write transaction lock is applied during the entire import for a manual import, and is applied intermittently during an automatic import. While the write transaction lock is in place during either type of import, no other updates to the database can be made.
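A pre-check of an import file against the list of valid commands can catch stray rtrv commands before the import starts. The following Python sketch is one way to do that. It assumes that each command begins a line with its name; the helper `check_import_lines` is hypothetical and is not part of EPAP, and the `(...)` in the usage example is a placeholder for arguments, not PDBI syntax.

```python
import re

# Commands listed in this section as valid in an import file.
ALLOWED_COMMANDS = {
    "ent_sub", "upd_sub", "dlt_sub",
    "ent_entity", "upd_entity", "dlt_entity",
    "ent_eir", "upd_eir", "dlt_eir",
}

# A command name of the form verb_object at the start of a line.
_COMMAND_NAME = re.compile(r"^\s*([A-Za-z]+_[A-Za-z]+)")


def check_import_lines(lines):
    """Return (line_number, command) for each disallowed command found."""
    problems = []
    for number, line in enumerate(lines, start=1):
        match = _COMMAND_NAME.match(line)
        if match and match.group(1) not in ALLOWED_COMMANDS:
            problems.append((number, match.group(1)))
    return problems
```

For example, `check_import_lines(["ent_sub(...)", "rtrv_sub(...)"])` reports the second line so that it can be removed before the file is imported.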

Manual Import

The manual import mode is used to import data on a one-time or as-needed basis. The manual import mode is configured with the Import File to PDB Screen. The selected file is processed immediately. A manual import locks the PDB write transaction; other users will not be able to obtain the write transaction until the import operation is complete.

Automatic Import File Setup

When the PDB is active, the automatic import searches the /var/TKLC/epap/free/pdbi_import directory for new files on a remote system for import every five minutes. If a file exists in the directory and the file is not being modified or in the process of being transferred when it is polled, the import will run automatically at that time. If the file is being modified or is in the process of being transferred, the automatic import tries again after five minutes.

The automatic import option can import up to 16 files at one time. The number of files imported is limited by the available number of PDBI connections. If more than 16 files exist in the directory, another file is started after a previous file completes until all files have completed. The files are imported sequentially. The results of the import are automatically exported to the remote system specified by the Configure File Transfer Screen.

After the import is complete, the data file is automatically removed and a results file is automatically transferred to the remote system.

An automatic import obtains the PDB write transaction and processes ten of the import file commands. Then the write transaction is released, allowing other connections to provision data. An automatic import obtains the write transaction repeatedly until all of the import file commands are processed.
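The batching behavior can be pictured with a short Python sketch that holds a write transaction for at most ten commands at a time. It is a conceptual model only: `run_in_batches`, `write_transaction`, and `apply_command` are hypothetical names, and the caller supplies the transaction as a context manager.

```python
BATCH_SIZE = 10  # commands processed per write transaction, per the text


def run_in_batches(commands, write_transaction, apply_command,
                   batch_size=BATCH_SIZE):
    """Apply all commands, releasing the write transaction between batches.

    write_transaction is a callable that returns a context manager;
    entering it models obtaining the PDB write transaction and leaving
    it models the release. Returns the number of acquisitions.
    """
    acquisitions = 0
    for start in range(0, len(commands), batch_size):
        with write_transaction():
            acquisitions += 1
            for command in commands[start:start + batch_size]:
                apply_command(command)
        # The transaction is released here, so other connections can
        # provision data before the next batch begins.
    return acquisitions
```

An import file with 25 commands would therefore obtain the write transaction three times in this model, whereas a manual import holds it once for the entire file.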

Automatic Import Status

When using the automatic import function, the following informational banner messages are displayed on the UI browser screen in the Message Box described in Figure 3-21.

Import of <filename> in progress - xx.xx% (while in progress)

Import of <filename> completed (when complete)

If the import fails when the PDBA is not running and the automatic import was started by cron, the following informational banner message is displayed on the UI browser screen in the Message Box described in Figure 3-21.

Import of <filename> failed - no PDBA

If the import fails when the connection to the PDBA is lost while the automatic import is in progress, the following informational banner message is displayed on the UI browser screen in the Message Box described in Figure 3-21.

Import of <filename> failed - PDBA died

If an automatic import fails, an automatic retry will occur every five minutes.

Export Files

The manual export and automatic export are the available export file options. Data can be exported in both PDBI and CSV formats. Refer to Provisioning Database Interface Guide for more information. The Manual File Export allows data to be exported to a specified location on a one-time or as-needed basis, and is configured by the Export PDB to File Screen.

If the export file needs to be automatically transferred to a remote server, the file name should start with "pdbAutoExport".

For example: "pdbAutoExport_<hostname>_<YYYYMMDDhhmmss>".

For instance, if the following files are present in the /var/TKLC/epap/free/pdbi_export directory:

pdbExport_Arica-A_202411251131.tar.gz

pdbAutoExport_Arica-A_20241125113147.tar.gz

testPdbFile.tar.gz

Only pdbAutoExport_Arica-A_20241125113147.tar.gz will be automatically transferred to the remote server (if Configure File Transfer is enabled). The rest will remain in the directory for manual or other intended use.
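The naming rule amounts to a prefix test on the file name. The following Python sketch reproduces the example above; `files_to_transfer` is a hypothetical helper for illustration, not an EPAP utility.

```python
AUTO_EXPORT_PREFIX = "pdbAutoExport"


def files_to_transfer(filenames):
    """Return the file names that qualify for automatic transfer."""
    return [name for name in filenames if name.startswith(AUTO_EXPORT_PREFIX)]


export_dir_listing = [
    "pdbExport_Arica-A_202411251131.tar.gz",
    "pdbAutoExport_Arica-A_20241125113147.tar.gz",
    "testPdbFile.tar.gz",
]
selected = files_to_transfer(export_dir_listing)
```

Here `selected` contains only `pdbAutoExport_Arica-A_20241125113147.tar.gz`.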

Automatic File Export

The Automatic File Export function allows scheduling the data export for a specific day and time. The export can be scheduled at a specific time for each of the following repeat periods: every N days (up to 365), specified days of the week, a specified day of the month, or a specified day of the year. The Schedule Export screen displays any existing PDB export tasks and is used to create a task by specifying the type, export format (PDBI or CSV), export mode (blocking, snapshot, or real-time), the time, and the repeat period. In addition, a comment field is available to describe the task. For "Export PDB to File", the output filename format is pdbAutoExport_<hostname>_<YYYYMMDDhhmmss>, and the file is saved in pdbi_export. The PDBA must be active at the scheduled time of export for the file to be exported.

Automatic PDB/RTDB Backup

The Automatic PDB/RTDB Backup feature is used to back up all data stored in the PDB or RTDB, including G-Port, G-Flex, INP/AINPQ, A-Port, Migration, V-Flex, and EIR data. The Automatic PDB/RTDB Backup feature automates the process of creating backups of the PDB and RTDB databases at the time, frequency, and to the destination configured by the user. The PDB backup is created on EPAP A and RTDB backup is created on the standby EPAP (A or B). Approximately 17 GB of disk storage space is required per backup.

The following options are available for configuring a destination for the backup file:

- Local - Data is saved to the local disk on the same EPAP server as the PDB or RTDB that is being backed up.

- Mate - Data is created on the local server and then sent using SCP to the mate EPAP server.

- Remote - Data file is created on the local EPAP server and then sent using SFTP to a remote server configured by the user. SFTP must be installed at this remote server. This server may or may not run EPAP software and can be any machine on the network.

For mate or remote backup destinations, an option exists to save a copy of the backup to the local drive. For mate or remote backup destinations, even if the user has selected the option to not save the local copy, the local copy will be saved if the file transfer fails after the backup file has been created on the local machine.

Both the PDB and RTDB backups are scheduled together, but run separately. Based on the input parameters, the RTDB backup always starts one hour before the PDB backup. When setting up the feature, the time that the RTDB backup starts is selected. The PDB backup starts one hour later.

Choose whether to update the configuration on all provisioned and non-provisioned sites. Automatic Backup configuration can be applied in different ways depending on your goal. Select “Yes” when you are configuring Automatic Backup for the first time and want the same settings to be applied across all sites. This lets you update the configuration once and propagate it to all other sites. Select “No” when you only need to change the backup type, time, or frequency for Automatic Backup, without updating the configuration across all sites.

No link exists between the backups of one MPS system and the backups of the other MPS system. Backups can be scheduled and created only on provisionable pairs. The PDB/RTDB Automatic Backup is not allowed and cannot be scheduled on a non-provisionable pair.

Normal provisioning is allowed during the PDB/RTDB Automatic Backup. This includes provisioning from the customer network to the PDB, provisioning from the PDB to the active EPAP RTDB, and provisioning from the active EPAP RTDB to the Service Module card RTDB. RTDB backups are always created from the standby EPAP RTDB (A or B).

If backup failures occur, alarms and error messages are generated and logged. Two types of backup failures are:

- Backup operation failures: This is a failure to create backup files on either of the systems - local or mate. Two alarms are possible: one for the PDB and one for the RTDB.

- Backup transfer failures: This is the failure to transfer a backup file to the mate or remote site. The backup files exist on the local machine. Two alarms are possible: one for the PDB and one for the RTDB.

A delay of up to five minutes is possible after the scheduled time before the actual start of the scheduled backup.

The effects of canceling a backup while it is executing are:

- If the automatic backup of the RTDB is in progress, the RTDB backup will complete and the PDB backup will not start.

- If the RTDB backup has completed but the PDB backup has not started, the PDB backup will not start.

- If the RTDB backup has completed and the PDB backup has started, the PDB backup will complete.

This feature is supported and configured using the Web-based GUI. Refer to Automatic PDB/RTDB Backup for details on setting up the Automatic PDB/RTDB Backup feature.

Note:

If automatic backup is configured, but it fails to update the configuration on the other clients, you will receive a banner message depending on your case.

In Case of Provisionable Client Configuration Failure:

"Automatic PDB Backup Configuration Failed for Remote Provisionable server: #IP#"

In case of Non Provisionable Client Configuration Failure:

a) For less than or equal to 5 Non Prov Clients:

"Automatic RTDB Backup Configuration Failed for Non-provisionable server(s): #IP#"

b) For more than 5 Non Prov Clients:

"Automatic RTDB Backup Configuration Failed for more than 5 Non-provisionable servers"

In such cases, you can reconfigure automatic PDB/RTDB backup for the client in which configuration failed, and remove the Banner message manually by using the below command on the server (CLI):

a) To remove for Prov Client:

manageBannerInfo -d "AUTO_CONNECT_PROV_FAIL"

b) To remove for Non Prov Client:

manageBannerInfo -d "AUTO_CONNECT_NONPROV_FAIL"

EPAP Automated Database Recovery

The EPAP Automated Database Recovery (ADR) feature is used to restore the EPAP system function and facilitate the reconciliation of PDB data following the failure of the Active PDBA.

The automated recovery mechanism provided by this feature allows one PDBA to become Active when two PDBAs think they are active and have updates that have not been replicated to the mate PDBA. The software selects the PDBA that received the most recent update from its mate to become the Active PDBA; the PDBA that was the Standby most recently becomes the Active. No automatic reconciliation is performed because the system has insufficient information to ensure that the correct actions are taken.

To return the system to normal functionality, a manual PDB copy must be performed from the PDBA that was chosen to be Active to the PDBA that is in the replication error (REPLERR) state. However, provisioning can be resumed until a maintenance period is available to perform the manual PDB copy.

The Customer Care Center must be contacted before performing the PDBA Copy procedure.

The EPAP Automated Database Recovery feature uses a replication error list that consists of updates that exist as a result of a failure during the database replication process from the active-to-standby PDB. These updates have not been propagated (reconciled) throughout the system and require manual intervention to ensure that the EPAP systems properly process the updates.

EPAP Automated Database Reconciliation Example

This example starts with the PDBAs in the following configuration.

Updates 701, 702, and 703 on Node 1 have not been replicated to Node 2.

Table 2-9 Example 1 EPAP ADR

| Active PDBA (Node 1) | Standby PDBA (Node 2) |
|---|---|
| DB Level-704 | DB Level-700 |
| Updates to replicate to standby PDBA | |
| 701 | |
| 702 | |
| 703 | |

Assume that a fault takes down the Node 1 PDBA before the replication process is complete. Node 2 has become the Active PDBA and is now receiving provisioning updates.

Table 2-10 Example 2 EPAP ADR

| Failed PDBA (Node 1) | Active PDBA (Node 2) |
|---|---|
| DB Level-704 | DB Level-700 |
| Updates on replication error list | Processing DB Level updates |
| 701 | 701 (different than 701 on node 1) |
| 702 | 702 (different than 702 on node 1) |
| 703 | 703 (different than 703 on node 1) |

-

Updates 701-703 on Node 1 have not been replicated to Node 2.

-

Updates 701-703 on Node 1 are different from updates 701-703 on Node 2.

The PDBA that received an update with the latest timestamp (in this example, the Node 2 PDBA) automatically becomes the Active PDBA and continues accepting provisioning updates. The Node 1 PDBA is put in a REPLERR state and a PDBA Replication Failure alarm is raised on the Node 1 PDBA.

A replerr file (REPLERR PDBA) with the replication log lists from the Node 1 PDBA is created. This file contains the lost Node 1 provisioning updates (701-703) and is in the format of a PDBI import file. The customer can examine the file and decide whether to reapply these updates to the Active PDBA.

After the Node 1 PDBA becomes available, the customer must temporarily suspend provisioning and perform a PDB copy of the Node 2 PDBA to the Node 1 PDBA to reconcile the PDBs before the Node 1 PDBA can be made active again.
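The selection step in this example can be sketched in Python as a comparison of update timestamps. This is an illustrative model, not the EPAP algorithm: `PdbaStatus`, `resolve_dual_active`, and the `last_update` field are hypothetical, and keeping the first argument active on a tie is an assumption that this guide does not address.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class PdbaStatus:
    """Minimal stand-in for one PDBA in a dual-active situation."""
    node: str
    last_update: datetime  # timestamp of the latest update received
    state: str = "ACTIVE"


def resolve_dual_active(first, second):
    """Keep the PDBA with the latest update Active; mark the other REPLERR.

    Returns the PDBA that stays Active. On a tie, the first argument
    stays Active (an assumption made for this sketch).
    """
    if first.last_update >= second.last_update:
        winner, loser = first, second
    else:
        winner, loser = second, first
    winner.state = "ACTIVE"
    loser.state = "REPLERR"  # requires a manual PDB copy to recover
    return winner
```

Applied to the example, the Node 2 PDBA has the later timestamp, so it stays Active and the Node 1 PDBA enters the REPLERR state until the manual PDB copy is performed.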

The EPAP Automated Database Recovery feature is enabled through the text-based EPAP user interface.

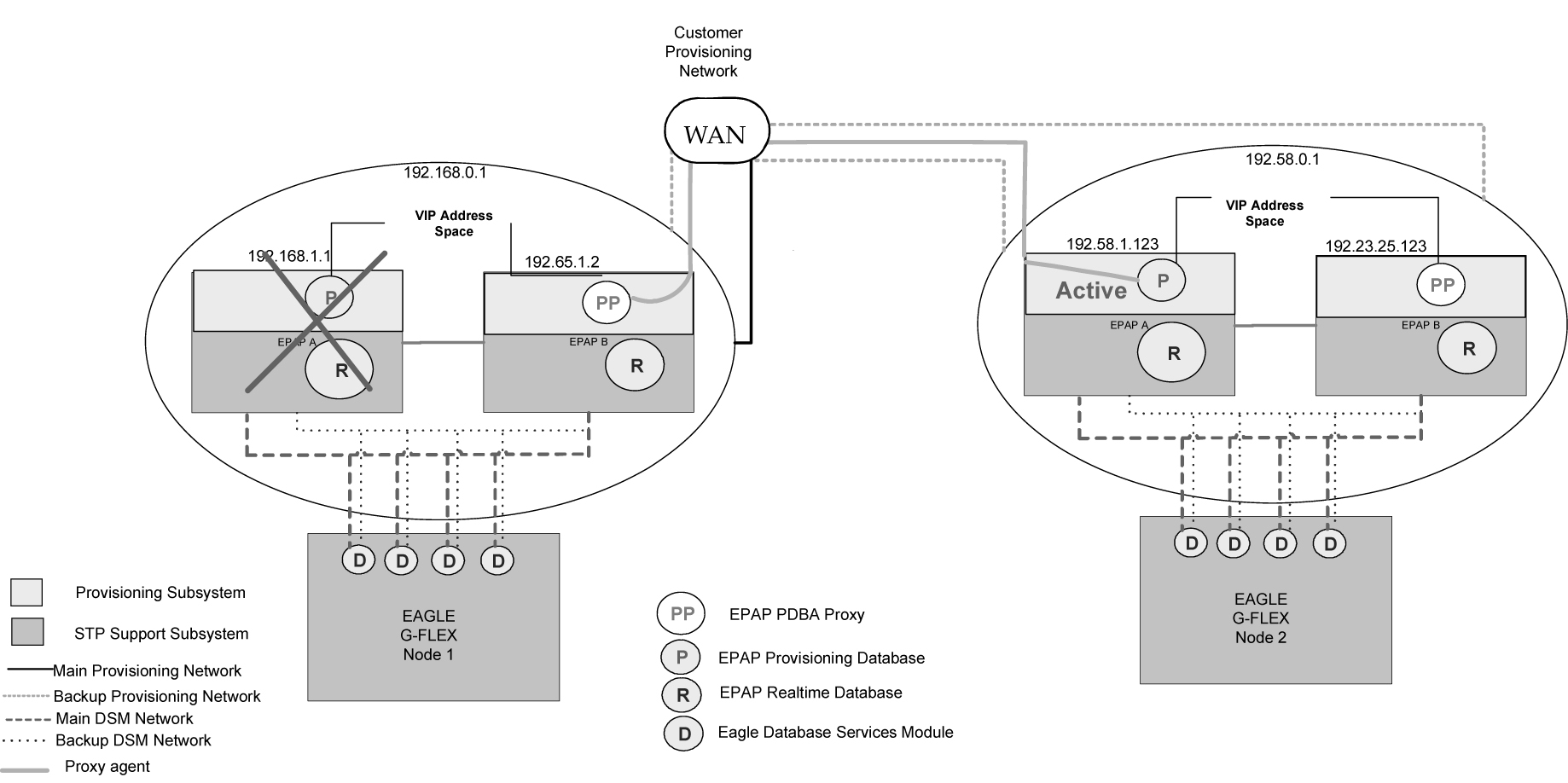

EPAP PDBA Proxy Feature

The EPAP PDBA Proxy feature allows operators to maintain the existing (active) provisioning VIP address connection to the EPAP PDBA in the event of an active PDBA failure. The advantages of this feature are:

- Seamless, uninterrupted VIP connection for provisioning data to the standby EPAP PDBA if the active PDBA fails

- No need to perform a manual switchover to the standby PDBA or change the provisioning VIP address in operator provisioning software

- A means of reconciling both PDBAs when the failed PDBA becomes available again

The EPAP system uses two provisioning VIP addresses: one VIP for the local or active EPAP and one VIP for the remote or standby EPAP. However, provisioning can be performed only on the active provisioning VIP address. The EPAP PDBA Proxy feature maintains the existing, active provisioning VIP address connection to the previously active Node when the active PDBA fails. The previously active EPAP B on that Node proxies provisioning data to the temporarily active PDBA on the other Node.

With the EPAP PDBA Proxy feature, PDBA connection redundancy is accomplished by allowing the customer’s provisioning system to maintain the connection to the previously active EPAP B using the active provisioning VIP address, even though the connection may be logically, by-proxy, to the previously standby PDBA on the other Node. When the previously active PDBA recovers, that PDBA is aware that the standby PDBA has become active and the PDBAs need to be reconciled.

EPAP PDBA Proxy Feature Requirements/Limitations

Some requirements and limitations of the EPAP PDBA Proxy feature are:

- The customer provisioning system loses connection to the PDBA:

- When the EPAP PDBA Proxy feature is first initiated upon server failure and switchover to the backup PDBA

- During recovery when service is being restored to the PDBA on the server that had failed

When the connection is lost, it must be re-established to the same VIP address.

- Both provisioning VIP addresses (the local or active EPAP VIP and the remote or standby EPAP VIP) must be configured using the text-based EPAP user interface. See Configure Provisioning VIP Addresses.

- The Change PDBA Proxy State procedure must be performed on both active and standby MPS-A PDBAs. See Change PDBA Proxy State.

- The EPAP PDBA Proxy feature does not provide PDBA connection redundancy in the case of PDBA (application) failure. The EPAP PDBA Proxy feature provides PDBA connection redundancy only after failure of the active EPAP A.

- The EPAP PDBA Proxy feature does not initiate in response to a Switchover PDBA State. A manual switchover requires the operator to change the provisioning VIP address in operator provisioning software.

- The EPAP PDBA Proxy feature does not require activation of the Automated Database Recovery (ADR) feature.

- The EPAP PDBA Proxy feature is not supported for Standalone PDB EPAP.

- SSH tunneling does not work with the PDB proxy feature as SSH tunneling works with server IP and not with VIP.

Note:

If a replication error (REPLERR) occurs while using the EPAP PDBA Proxy feature, manual intervention is required. Contact My Oracle Support.

EPAP PDBA Proxy Example

During normal provisioning operations:

-

Operator provisioning system sends provisioning updates to the Active PDBA on Node 1 using the active provisioning VIP address (192.168.0.1).

-

Active PDBA checks syntax and writes to the Active PDB.

-

Active PDBA writes to a replication log on the EPAP A and local EPAP B.

-

Active PDBA sends an ACK response to the operator provisioning system.

-

Standby PDBA on Node 2 queries the EPAP A replication logs on Node 2 and updates the PDB on Node 2.

A fault (server failure) on Node 1, which hosts the active PDBA, is shown in Figure 2-5:

- With the EPAP PDBA Proxy feature enabled, the system continues using the active provisioning VIP address (192.168.0.1).

Note:

During the switchover to the PDBA on Node 2, the customer provisioning system loses connection to the PDBA. The connection must be re-established to the same VIP address.

- If the Standby PDBA on Node 2 is reachable, EPAP B on Node 1 transmits replication logs to the Node 2 PDBA.

- When the Node 2 PDBA has all the replication logs from the EPAP B on Node 1, the Node 2 PDBA becomes the Active by-proxy PDBA.

- Operator provisioning continues using provisioning VIP address 192.168.0.1 because EPAP B on Node 1 proxies provisioning data to the newly Active PDBA on Node 2.

Figure 2-5 Failure of Active PDBA

When the Node 1 PDBA is restored to service:

- Updates sent to Node 2 PDBA while the Node 1 PDBA was down are forwarded to the Node 1 PDB.

- Records are replicated. Node 1 becomes the Active PDBA and Node 2 reverts to Standby status.

Note:

During recovery when the PDBA on Node 1 is being restored to service, the customer provisioning system loses connection to the PDBA. The connection must be re-established to the same VIP address.
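The reconnection behavior described in the notes above can be sketched from the point of view of the customer provisioning system. This is a minimal illustration only: the `connect` callable, the attempt count, and the delay are hypothetical, and the VIP address is the one used in this example. The point it illustrates is that the client retries against the same VIP address rather than switching to a server IP address.

```python
import time

# VIP address taken from the example in this section.
PROVISIONING_VIP = "192.168.0.1"


def reconnect(connect, attempts=5, delay_seconds=0.0):
    """Retry a connection to the same provisioning VIP address.

    `connect` is a hypothetical callable that takes an address and
    either returns a session object or raises ConnectionError.
    Returns the session and the number of attempts that were needed.
    """
    for attempt in range(1, attempts + 1):
        try:
            return connect(PROVISIONING_VIP), attempt
        except ConnectionError:
            # The PDBA is still switching over; wait and try the same VIP.
            time.sleep(delay_seconds)
    raise ConnectionError(
        f"PDBA not reachable at {PROVISIONING_VIP} after {attempts} attempts"
    )
```

A real client would pass its own connection routine and a non-zero delay between attempts.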

This feature is enabled through the text-based EPAP user interface. See PDB Configuration Menu.

Allow Write Commands on EPAP During Retrieve/Export Feature

This feature allows an EPAP user to provision data via the GUI or PDBI while simultaneously performing a data export using the GUI or PDBI. The three modes of operation are:

-

Blocking mode - Blocks all write requests while an export is in progress.

-

Snapshot mode - Allows writes to continue during the export, and provides the export as a complete snapshot of the database at the time the export started. Changes made to the database after export has started are not reflected in the export file. This mode provides a file that is the most useful for importing into the database at a later time.

Note:

This mode causes the server to run progressively slower as updates are received on the other connections.

-

Real time mode - Allows writes to continue during export, but provides the export file in real-time fashion rather than as a snapshot. Changes to the database after the export has started may or may not be reflected in the export file, depending upon whether the changes are to an area of the database that has already been exported. This mode provides a file that can be imported into the database at a later time, but is less useful because it is not a complete snapshot of the database at a given time.
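The difference between the three modes can be illustrated with a small in-memory model. This is a sketch under stated assumptions, not the EPAP implementation: `ToyDatabase`, `ExportMode`, and the idea of applying one pending write after each exported record are all hypothetical devices used to make the timing visible.

```python
from enum import Enum


class ExportMode(Enum):
    BLOCKING = "blocking"
    SNAPSHOT = "snapshot"
    REAL_TIME = "real_time"


class ToyDatabase:
    """In-memory stand-in for the database; purely illustrative."""

    def __init__(self, records):
        self.records = dict(records)
        self.export_in_progress = False
        self.mode = None

    def write(self, key, value):
        # Blocking mode rejects write requests while an export is running.
        if self.export_in_progress and self.mode is ExportMode.BLOCKING:
            return False
        self.records[key] = value
        return True

    def export(self, mode, writes_during_export=()):
        """Export every record, applying one pending write after each record."""
        self.mode = mode
        self.export_in_progress = True
        pending = list(writes_during_export)
        # Snapshot mode reads from a copy frozen when the export starts;
        # real-time mode reads the live records as it goes.
        if mode is ExportMode.SNAPSHOT:
            source = dict(self.records)
        else:
            source = self.records
        exported = {}
        for key in sorted(source):
            exported[key] = source[key]
            if pending:
                self.write(*pending.pop(0))
        self.export_in_progress = False
        return exported
```

With records `a`, `b`, `c` and writes to `a` and then `c` arriving during the export, the snapshot export contains only the original values, while the real-time export picks up the change to `c` (not yet exported when the write arrived) but not the change to `a` (already exported).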

EPAP 30-Day Storage or Export of Provisioning Logs Feature

The EPAP 30-Day Storage or Export of Provisioning Logs feature allows the EPAP to store provisioning logs on the EPAP hard drive for a configurable interval, provided that the disk partition does not become full within that interval. This feature also allows configuration of the storage time for error logs and debug logs.

Table 2-11 Log Storage Intervals

| Log Type | Configurable Storage Range | Default Value |
|---|---|---|
| Provisioning Logs | 1 to 30 days | 1 day |
| Error Logs | 1 to 30 days | 1 day |
| Debug Logs | 1 to 7 days | 1 day |

Alarms notify the user when the log disk is 80% or 90% full. The default threshold value is 95%.

-

If the disk where the logs are stored becomes 80% full before the configured time period has elapsed, the EPAP issues a minor alarm. No files are removed at this point.

-

If the disk where the logs are stored becomes 90% full before the configured time period has elapsed, the EPAP issues a major alarm. No files are removed at this point.

-

If the disk where the logs are stored becomes 95% full before the configured time period has elapsed, the EPAP issues a critical alarm. The EPAP will begin removing the oldest entries in the logs to free disk space for new entries.

-

The alarms are cleared when the disk space in use decreases to less than 80% or 90%, respectively.

Note:

The 80%, 90%, and 95% alarms do not coexist. If the 80% alarm is active when the 90% alarm is triggered, the 80% alarm is replaced by the 90% alarm until the 90% alarm clears. After the 90% alarm clears, the 80% alarm remains active until it is cleared. The 90% and 95% alarms behave in the same way: if the 90% alarm is active when the 95% alarm is triggered, the 90% alarm is replaced by the 95% alarm until the 95% alarm clears.
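The threshold behavior described above can be summarized as a small lookup. This is an illustrative sketch only: the function names are hypothetical, and the sketch treats "becomes N% full" as usage greater than or equal to N, which is an assumption about the boundary. Because the alarms do not coexist, only the highest applicable severity is returned.

```python
# (threshold in percent, alarm severity), highest first.
THRESHOLDS = [(95, "critical"), (90, "major"), (80, "minor")]


def active_alarm(percent_full):
    """Return the single alarm that applies at this disk usage, or None."""
    for threshold, severity in THRESHOLDS:
        if percent_full >= threshold:
            return severity
    return None


def should_remove_oldest_entries(percent_full):
    """Log entries are removed only once the critical threshold is reached."""
    return percent_full >= 95
```

For example, a disk that fills from 85% to 92% moves from the minor alarm to the major alarm, and no log entries are removed in either state.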

EPAP User Interface

The EPAP provides two user interfaces that consist of sets of menus for configuration, maintenance, debugging, and platform operations. When a menu item is chosen, the user interface directs the system to perform the requested action.

-

Graphical User Interface

The Graphical User Interface (GUI) provides menus to perform routine operations that maintain, debug, and operate the platform. A PC with a network connection and a Web browser is used to communicate with the EPAP GUI. Overview of EPAP Graphical User Interface (GUI) describes GUI login, menu items, and associated outputs.

-

Text-based User Interface

The text-based User Interface provides the Configuration menu to initialize and configure the EPAP. The text-based User Interface is described in EPAP Configuration Menu. For information about configuring the EPAP and how to set up a PC workstation, refer to Setting Up an EPAP Workstation.

Service Module Card Provisioning

One of the core functions of the EPAP is to provision the Service Module cards with database updates.

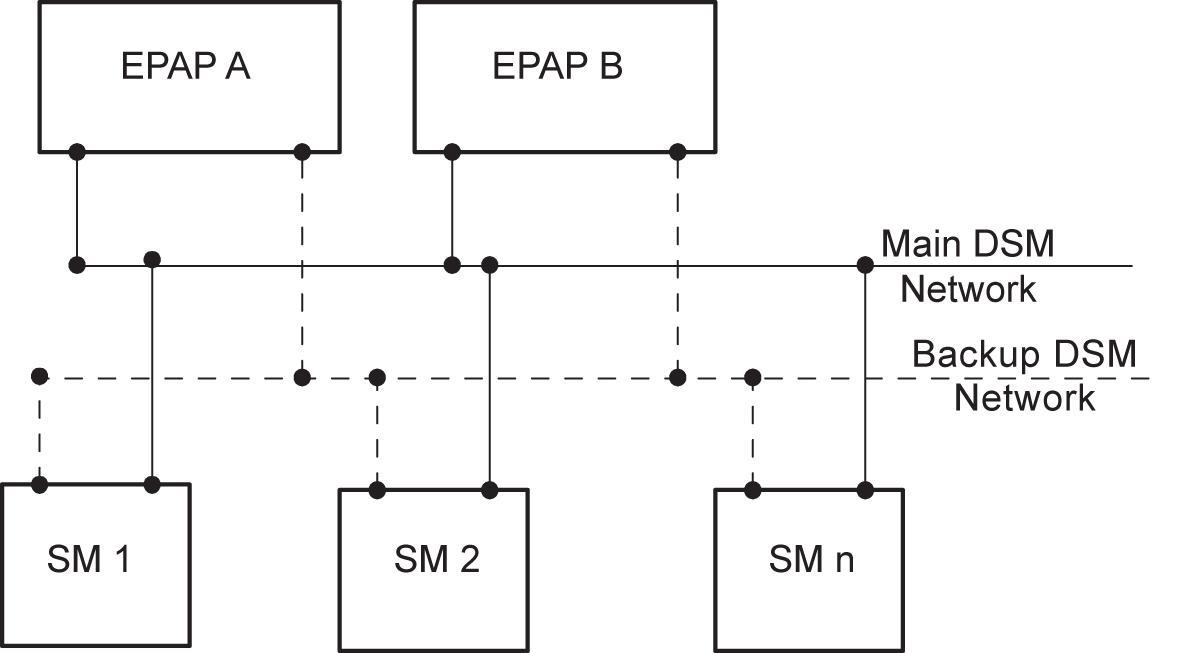

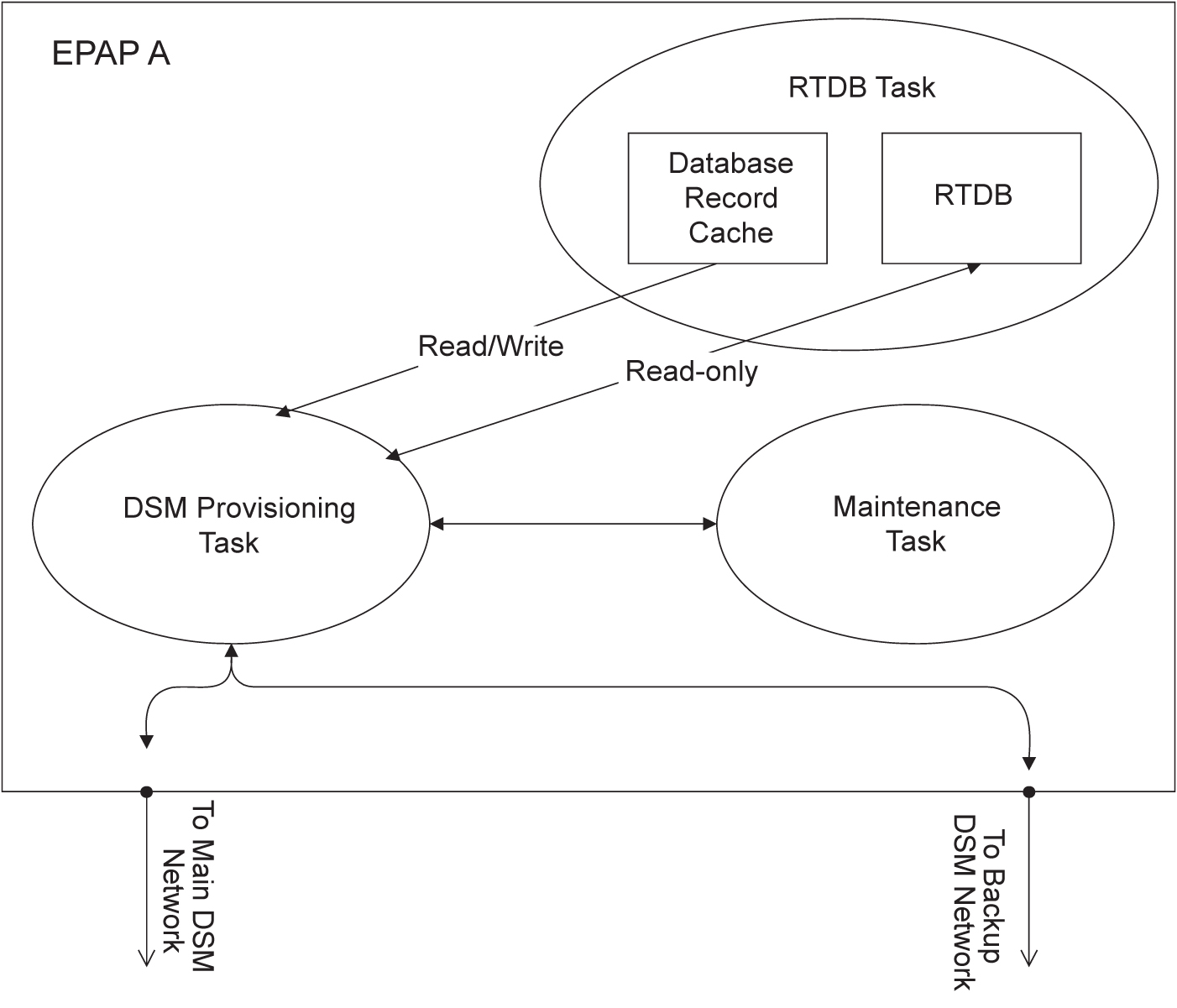

The DSM provisioning task resides on both EPAP A and EPAP B. It communicates internally with the real-time database (RTDB) task and the EPAP maintenance task. The DSM provisioning task broadcasts provisioning data to connected Service Module cards across two Ethernet networks. See Network Connections. The DSM provisioning network architecture is shown in Figure 2-6 and the DSM provisioning task interface is shown in Figure 2-7.

Figure 2-6 DSM Provisioning Network Architecture

Figure 2-7 DSM Provisioning Task Interfaces

In order to handle the redundancy requirements for this feature, a separate RMTP channel is created on each interface from each EPAP:

-

EPAP A, Main DSM network

-

EPAP A, Backup DSM network

-

EPAP B, Main DSM network

-

EPAP B, Backup DSM network

Provisioning and other data is broadcast on one of these channels to all of the Service Module cards. Provisioning is done by database level in order to leave Service Module card tables coherent between updates.

In addition to a constant stream of current updates, it is necessary to provision back-level Service Module cards with incremental update streams that use the same delivery mechanism as the current provisioning stream.

Provisioning Model

For the purpose of this discussion, provisioning originates from the PDB task in coordination with the RTDB task. At startup, the provisioning task initiates a session with the RTDB using a null database level. The RTDB initializes the session using the actual current database level. At regular 1.5 second intervals, the provisioning task sends a data request to the RTDB. The RTDB responds even if no new data is available. The provisioning task sends a provisioning message on the DSM network.
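The request and response cycle above can be sketched as a polling loop. This is a simplified model, not the EPAP code: `ToyRtdb`, `run_polls`, and the `arrivals` argument are hypothetical, the sleep between polls is omitted so the example runs instantly, and the sketch assumes that a provisioning message is sent on every cycle, including cycles with no new records.

```python
POLL_INTERVAL_SECONDS = 1.5  # interval stated in the text; not slept on here


class ToyRtdb:
    """Illustrative stand-in for the RTDB task."""

    def __init__(self, current_level):
        self.current_level = current_level
        self.updates = {}  # database level -> record

    def open_session(self, requested_level):
        # A null (None) level is replaced with the actual current level.
        if requested_level is None:
            return self.current_level
        return requested_level

    def add_update(self, record):
        self.current_level += 1
        self.updates[self.current_level] = record

    def fetch(self, after_level):
        """Always answer, even when there are no new records to return."""
        levels = sorted(lv for lv in self.updates if lv > after_level)
        return [(lv, self.updates[lv]) for lv in levels]


def run_polls(rtdb, polls, arrivals):
    """Run `polls` cycles; `arrivals` maps a cycle index to new records."""
    level = rtdb.open_session(None)
    messages = []
    for cycle in range(polls):
        for record in arrivals.get(cycle, []):
            rtdb.add_update(record)
        response = rtdb.fetch(level)
        messages.append(response)  # one provisioning message per cycle
        if response:
            level = response[-1][0]
    return messages
```

A production loop would wait `POLL_INTERVAL_SECONDS` between cycles; the constant is included only to tie the sketch back to the stated interval.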

Incremental Loading Model

Incremental loading occurs when a Service Module card has missed some updates, but does not need a complete reload.

The Service Module card detects that the current database level is higher than the update it expected, and indicates its current DB level to the maintenance task. The maintenance task requests that the DSM provisioning task begin a new incremental loading stream at the requested Service Module card level.

Once an incremental loading stream is set up, the following incremental loading transaction is repeated until the Service Module cards reach the current RTDB level:

The DSM provisioning task requests records associated with the database level for this stream. The RTDB task returns records associated with that level and sequentially higher levels (up to the maximum message size or the current RTDB level). The DSM provisioning task provisions the Service Module cards with the records.

Note:

Incremental loading and normal provisioning are done in parallel. The DSM provisioning task supports up to five incremental loading streams in addition to the normal provisioning stream.

Incremental reload streams are terminated when the database level contained in that stream matches that of another stream. This is expected to happen most often when the incremental stream “catches up to” the current provisioning stream. Service Module cards accept any stream with the “next” sequential database level for that card.
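The stream limit and the termination rule can be modeled as follows. This is a sketch under assumptions: `StreamManager` and its methods are hypothetical names, the current provisioning level is held fixed for simplicity, and refusing a sixth stream by returning `False` is an assumption, since the text does not say how an excess request is handled.

```python
MAX_INCREMENTAL_STREAMS = 5  # limit stated in the text


class StreamManager:
    """Tracks the database level carried by each incremental stream."""

    def __init__(self, current_level):
        self.current_level = current_level  # normal provisioning stream
        self.incremental = []               # levels of incremental streams

    def request_stream(self, card_level):
        """Start a stream at card_level unless an existing stream covers it."""
        if card_level >= self.current_level or card_level in self.incremental:
            return True
        if len(self.incremental) >= MAX_INCREMENTAL_STREAMS:
            return False  # assumed behavior when all five streams are in use
        self.incremental.append(card_level)
        return True

    def advance(self, step=1):
        """Move every incremental stream forward, then drop duplicates."""
        advanced = sorted(
            (min(level + step, self.current_level) for level in self.incremental),
            reverse=True,
        )
        seen = {self.current_level}
        survivors = []
        for level in advanced:
            # A stream whose level matches another stream is terminated.
            if level not in seen:
                seen.add(level)
                survivors.append(level)
        self.incremental = survivors
```

In this model, a stream that catches up to the current provisioning level disappears from `incremental`, which mirrors the most common termination case described above.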

Service Module Card Reload

The stages of database reload for a given Service Module card are given the following terminology:

Stage 1 loading - The database is being copied record for record from the Active EPAP to the Service Module card RTDB. The database is incoherent during stage 1 loading.

Incremental update - The database is receiving all of the updates missed during stage 1 loading or for some other reason (such as network outage, processor limitation, or lost communication). The database is coherent but back level during incremental update.

Current - The database is receiving current updates from the DSM provisioning task.

Coherent - The database is at a whole database level; it is not currently updating records belonging to a database level.

Service Module cards may require a complete database reload after a reboot or a loss of connectivity for a significant amount of time. The EPAP provides a mechanism to quickly load a number of Service Module cards with the current database. The database on the EPAP is large and may be updated constantly. The database sent to the Service Module card or cards will likely be missing some of these updates, making it corrupt as well as back level. The upload process is therefore divided into two stages: one to sequentially send the raw database records, and one to send all of the updates missed since the beginning of the first stage.

The Service Module card reload stream uses a separate RMTP channel from the provisioning and incremental update streams. This allows DSM multicast hardware to filter out the high volume of reload traffic from Service Module cards that do not require it.

Continuous Reload

The EPAP handles reloading of multiple Service Module cards from different starting points. Reload begins when the first Service Module card requires it. Records are read sequentially from the real-time database from an arbitrary starting point, wrapping back to the beginning. If another Service Module card requires reloading at this time, it uses the existing record stream and notifies the DSM provisioning task of the first record it read. This continues until all Service Module cards are satisfied.
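The wrap-around behavior can be illustrated with a small simulation. This is a sketch only: `continuous_reload` and its parameters are hypothetical, records are represented by their index, and each card is assumed to join after a fixed number of records have already been broadcast.

```python
def continuous_reload(num_records, joins, start=0):
    """Simulate one shared, circular record stream.

    joins maps a card name to the number of records already broadcast
    when that card joins. Returns, per card, the record indexes it
    received, in the order received.
    """
    received = {card: [] for card in joins}
    first_seen = {}  # first record index each card read
    done = set()
    position = start
    sent = 0
    while len(done) < len(joins):
        for card, join_at in joins.items():
            if card in done or sent < join_at:
                continue
            if card not in first_seen:
                first_seen[card] = position
            elif position == first_seen[card]:
                # The stream has wrapped back to this card's first record.
                done.add(card)
                continue
            received[card].append(position)
        position = (position + 1) % num_records
        sent += 1
    return received
```

With five records, a stream that starts at record 2, and a second card joining after three records have been sent, the first card receives records 2, 3, 4, 0, 1 and the second receives 0, 1, 2, 3, 4. Both cards end up with the full set from a single stream.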

Service Module Card Database Levels and Reloading

The current database level when the reload started is of special importance during reload. When a Service Module card detects that the last record has been received, it sends a status message back to the EPAP indicating the database level at the start of reload. This action will start incremental loading. The Service Module card cannot, however, use the database until the DB level reaches the database level at the end of reload. As real-time database records are sent to the Service Module cards during reload, normal provisioning can change those records. All of the records affected between the start and end of reloading must be incrementally loaded before the database is coherent.
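The relationship between the two database levels can be sketched as a small state tracker. This is an illustration under assumptions: `ReloadingCard` and its methods are hypothetical, and the way the card learns the end-of-reload level (passed in here as an argument) is not specified in the text.

```python
class ReloadingCard:
    """Tracks when a reloaded card's database becomes usable."""

    def __init__(self, level_at_reload_start):
        self.level = level_at_reload_start
        self.level_at_reload_end = None
        self.stage = "stage 1 loading"

    def last_record_received(self, level_at_reload_end):
        """End stage 1 and report the start-of-reload level to the EPAP."""
        self.level_at_reload_end = level_at_reload_end
        self.stage = "incremental update"
        return self.level

    def apply_level(self, level):
        """Accept only the next sequential database level."""
        if self.stage == "stage 1 loading" or level != self.level + 1:
            return False
        self.level = level
        return True

    @property
    def usable(self):
        # Usable only after reaching the level at the end of the reload.
        return (
            self.level_at_reload_end is not None
            and self.level >= self.level_at_reload_end
        )
```

For a reload that starts at level 100 and ends at level 103, the card reports 100, then applies levels 101, 102, and 103 incrementally, and only at 103 does `usable` become true.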

MPS/Service Module Card RTDB Audit Overview

General Description

The fact that the EPAP advanced services use several databases, some of which are located on different platforms, creates the need for an audit that validates the contents of the different databases against each other. The audit runs on both MPS platforms to validate the contents of the Provisioning Database (PDB) and Real-time DSM databases (RTDB). The active EPAP machine validates the database levels for each of the Service Module cards. Refer to Figure 2-8 for the MPS hardware interconnection diagram.